How To: Job Chaining in Compressor

There are aspects of Compressor I don’t take full advantage of, and until recently one of them was job-chaining. Job-chaining is probably something that isn’t used a whole lot but becomes extremely handy in certain workflows. I’m sure there are plenty of use cases, so I’ll explain mine and allow you to draw your own conclusions. I noticed that my last job-chaining blog post gets a lot of traffic, so somebody out there is searching for this. Or it’s robots.

In my day-to-day, I commonly need to encode three versions of a video to post in a proprietary video library. The video player uses dynamic bitrate switching to choose the version used based on the user’s internet connection. When I produce a video, I typically have a 720p ProRes master, and then three other H.264 versions with different frame sizes and a lower frame rate.
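For anyone wondering what "dynamic bitrate switching" boils down to, here is a rough sketch of the kind of rule a player might use. This is a guess for illustration, not the actual logic of the proprietary player: the `pick_rendition` function and the `headroom` safety margin are hypothetical, and the three bitrates are simply the 400, 800, and 1200 kbps versions I encode.

```python
# Hypothetical rendition ladder: the three bitrates (in kbps) I encode.
RENDITIONS_KBPS = [400, 800, 1200]


def pick_rendition(bandwidth_kbps, headroom=0.8):
    """Return the highest bitrate that fits within a fraction of the measured bandwidth.

    `headroom` is an assumed safety margin, not something the real player documents.
    """
    budget = bandwidth_kbps * headroom
    fitting = [r for r in RENDITIONS_KBPS if r <= budget]
    # If nothing fits, fall back to the smallest version rather than failing.
    return max(fitting) if fitting else min(RENDITIONS_KBPS)


if __name__ == "__main__":
    for bandwidth in (300, 1000, 2000):
        print(bandwidth, "kbps connection ->", pick_rendition(bandwidth), "kbps version")
```

The point is only that each version has to exist ahead of time, which is why every video needs three encodes.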

I found that doing the resizing, frame rate change, and bitrate reduction all at once took forever. Something about throwing all those settings into a single transcode really trips Compressor up. Encoding a couple of minutes of video was taking an hour or more. Going from a high-performance, chunky codec like ProRes to the efficient but very complex H.264 (with frame controls) was mind-numbing. I’m sure I’d be pissed too if I had to do all kinds of resizing, reordering, and rearranging of frames. It’s a lot of processing to do all at once if you consider how the two codecs work.

My solution was job-chaining. Basically, Compressor allows you to set up a job, throw some settings on it, then use the output of that job as the source of another job with different settings, all within one batch.

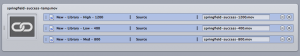

This was a solution because it splits up the work that seems to make Compressor freak out. In the first round of encoding, the ProRes 422 master file is encoded to another ProRes 422: everything is exactly the same as the original file, except for the frame size (and the frame rate, if I’m reducing that). This goes quickly, and there is no noticeable quality change. The files appear identical to me, just smaller and with a lower frame rate, obviously. Then, that resized file goes automatically to the second step, where there are three different outputs: 400, 800, and 1200 kbps files. All that happens in this step is the actual compression of the files. Doing these steps separately takes just a few minutes, instead of nearly an hour of encoding.
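If it helps to see the shape of the chain, here is a toy model of it in Python. It doesn't talk to Compressor at all: `Job`, `encode`, and `run_chain` are made-up names, the file names are placeholders, and "encoding" here just builds an output file name. It only shows how the first job's output becomes the second job's source.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    source: str                      # file this job reads
    settings: list                   # one output per setting
    outputs: list = field(default_factory=list)


def encode(source, setting):
    """Stand-in for a real encode: only builds the output file name."""
    stem = source.rsplit(".", 1)[0]
    return f"{stem}-{setting}.mov"


def run_chain(master, first_setting, second_settings):
    # Job 1: ProRes to ProRes, changing only frame size (and maybe frame rate).
    job1 = Job(master, [first_setting])
    job1.outputs = [encode(job1.source, s) for s in job1.settings]

    # Job 2 is chained: its source is whatever Job 1 produced.
    job2 = Job(job1.outputs[0], second_settings)
    job2.outputs = [encode(job2.source, s) for s in job2.settings]
    return job1, job2


if __name__ == "__main__":
    first, second = run_chain("master.mov", "resized", ["400k", "800k", "1200k"])
    print("Job 1 wrote:", first.outputs)
    print("Job 2 read: ", second.source)
    print("Job 2 wrote:", second.outputs)
```

One resize feeds all three compressed versions, so the expensive frame work happens once instead of three times.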

Could I do this in two steps? Obviously. But this ensures that it’s done exactly the same way every time, with the correct files. If I did them as separate steps, that’s two batches to set up, and I could get distracted and forget where I left off. It’s nice to drop one file and be done with it. Of course, I’m sure there are better use cases, but this is what works for me. I’m going to experiment with it more because it seems we’re not going to be reducing frame rates anymore (yay). Am I causing unnecessary quality loss? Maybe (probably), but it’s not noticeable in this use, where I’m pushing up a lot of content that usually isn’t highly complex.

How to Use Job Chaining in Compressor:

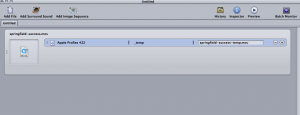

To set up a job-chaining job, open Compressor and drop in the file you want to use.

Apply the settings you’d like the first Target to have. Compressor still outputs this file for you to keep even though it passes it to another job, which could be very useful to your workflow.

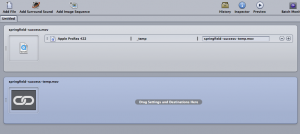

Click on the Settings for the first job. Then go to Job > New Job with Target Output.

A new Job will appear with a chain symbol, and the source is automatically filled in.

Now you can drag Settings onto this Job.

Then Submit the job. You’ll see Compressor work through Job 1, then Job 2.

Ta-da, you now have two files (or more if, like me, you create three files in the second Job). Honestly, this is more of a “hey this is a thing” than a real “how to” since it’s so simple to set up.

Compressor is kind of a piece of crap, but if you know how to work it, it can work pretty nicely for you.

Hopefully this blog post is a little more helpful than my last rambling one about job-chaining. By the way, if anyone has any comments on this – why I’m dumb, something I’m missing, or if you want to tell me I’m a gorgeous genius – I’d love to hear them. I’m no encoding god, I’m just tryin’ to make my way. Good day, robots.